*

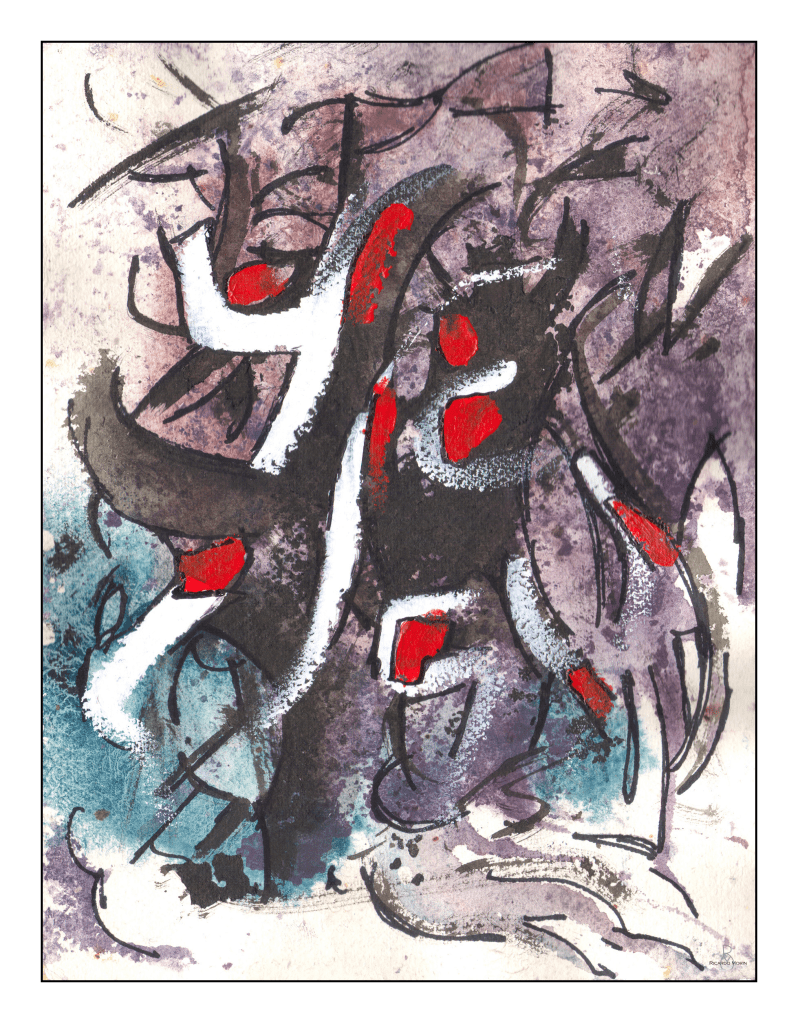

Triangulation Series Nº 2

37″ x 60″ x 2″

Oil on linen

2006

Ricardo F. Morín

March 10, 2026

Oakland Park, Florida

1

Modern societies describe progress through a vocabulary of invention and expansion. Yet the consequences often observed in economic life arise from institutional arrangements that precede the innovations themselves.

New technologies appear as discoveries; markets appear as opportunities; growth appears as the natural result of human ingenuity. This language creates an image of development that emphasizes creativity while it conceals a more durable structure beneath it. Systems of economic growth rarely begin with invention alone. They begin when governments, legal authorities, and commercial institutions convert conditions that once belonged to shared human life into resources that can be owned, measured, and exchanged.

Land becomes property; labor becomes wage labor; knowledge becomes data. Rivers that once supplied water freely to surrounding communities now appear in financial markets as tradable assets. Each transformation enlarges the field of economic activity because it reorganizes what was previously common. The narrative of progress celebrates the innovation that follows this conversion; yet the expansion often depends first on the extraction that made the innovation possible. Economic development therefore unfolds through a recurring institutional act: the conversion of shared conditions into organized systems of ownership.

2

The first large transformation occurred when land and labor entered modern economic systems as commodities. Earlier societies cultivated land and organized work through local obligations, customary rights, and communal practices. Modern economies introduced a different arrangement. Legal systems defined land as transferable property; this definition allowed estates, plantations, and industrial sites to circulate within markets.

Industrial production also required a stable supply of labor that could be measured and compensated in monetary terms. Wage contracts fulfilled that requirement. Workers exchanged hours of effort for income; employers calculated production through predictable units of labor.

This institutional reorganization created the foundation of industrial growth. Factories and commercial agriculture did not rely only on machinery; they relied on legal and economic systems that converted land and labor into inputs capable of sustaining continuous production. The Industrial Revolution therefore expanded not only through invention but also through the systematic reorganization of human and natural resources into economic instruments.

3

Industrial expansion soon demanded resources that extended beyond land and labor alone. Factories required concentrated sources of power capable of sustaining mechanical production on a large scale. Coal supplied the first solution; petroleum followed with even greater efficiency.

Extraction industries emerged to supply these fuels. Mining companies developed technologies that could remove coal from deep geological layers; oil firms drilled wells that reached reservoirs beneath land and sea. Railways, pipelines, and shipping routes connected these extraction sites to industrial centers.

Governments and corporations secured access to these resources through territorial agreements, drilling concessions, and strategic alliances that protected the shipping corridors and energy infrastructure carrying fuel across oceans to factories and cities. These arrangements tied distant territories to the energy demands of expanding industrial societies. Energy became the substance that sustained industrial economies; control of energy flows became a measure of geopolitical influence. Economic expansion therefore depended not only on technical invention but also on the ability of States to organize and protect systems of resource extraction across national boundaries.

4

The late twentieth century introduced a transformation that appeared to depart from this material pattern. Digital networks created environments where human activity could be recorded, stored, and analyzed. Companies that operated these networks soon recognized that the information generated through everyday interaction possessed economic value.

Search queries, online purchases, social exchanges, location signals, and browsing histories formed detailed records of behavior. Digital platforms developed algorithms that could process these records and identify patterns within them. Advertising systems used those patterns to match products with likely consumers; businesses purchased access to those predictions because they sought to increase sales.

Individuals who search for information, communicate with friends, or move through cities rarely perceive that these ordinary actions generate the data streams that sustain digital markets. These systems appear impersonal, yet they remain human constructions. Engineers design the platforms, legislators authorize the legal frameworks that permit data collection, and investors finance the infrastructure that organizes this information into profit. The authority of the system therefore rests on decisions made by identifiable actors who participate in its operation. Human behavior becomes a measurable resource within the digital economy, and everyday activity enters systems of calculation that transform ordinary experience into economic input.

5

Artificial intelligence extends this informational system into a new domain. Machine learning systems require vast collections of language, images, and recorded activity. Developers assemble these materials through large data sets that gather written expression, visual material, and behavioral traces from many sources.

Newspapers, books, photographs, academic research, and online conversations become training material for these systems. Computational processes analyze these materials and adjust internal parameters until recognizable patterns of language or perception emerge. The resulting models appear to generate knowledge independently; yet their structure depends on the human expressions that formed the training material.

Collective intellectual activity therefore becomes the substance from which artificial intelligence systems derive their capabilities. Firms that control these systems own the architecture through which this knowledge becomes computational intelligence. Human creativity remains the origin; proprietary systems govern access to the resulting capabilities.

6

The apparent immateriality of this digital environment conceals a substantial physical foundation. Computation requires hardware that conducts electricity, stores information, and performs complex calculations. These devices depend on minerals extracted from the earth.

Copper carries electrical current through circuits and transmission lines. Lithium carries charge in the batteries that power portable systems, and cobalt stabilizes their cathodes. Rare earth elements form the permanent magnets used in turbines and electronic components. Silicon forms the basis of semiconductor fabrication.

Mining operations extract these materials from geological deposits; refining facilities separate and process them into usable forms; manufacturing plants assemble them into processors, memory systems, and data centers. The digital economy therefore rests on a chain of material production that extends from mineral extraction to computational infrastructure.

States compete intensely within this system because control of mineral supply chains influences technological capacity. Countries rich in copper, lithium, and rare earth elements negotiate new partnerships with industrial powers that require these materials. Technological development therefore reconnects digital innovation with the geopolitical realities of resource extraction.

7

Systems built on extraction rarely present themselves through that language. Advocates of each technological era often describe development as an inevitable progression that no society can alter. Industrialization carried that description; petroleum dependence carried it as well; digital expansion repeated the same claim. Phrases such as “the digital future cannot be stopped” or “artificial intelligence will transform everything” present technological systems as unavoidable outcomes.

This description performs an important function. When a system appears inevitable, criticism of its structure loses urgency. Public discussion shifts from examining how institutions organize resources toward adjusting to the system those institutions have already created.

Citizens repeat these expressions in public discussion and private conversation; by doing so they reinforce the appearance that technological systems operate beyond human choice. This repetition relieves individuals of the burden of questioning the structures that govern economic life and allows systems of extraction to continue without sustained scrutiny. Yet technological systems do not arise independently of political decision. Governments establish property rights, regulate industries, and authorize investment structures. Firms design platforms, infrastructure, and markets that channel resources into systems of production. The narrative of inevitability obscures these arrangements. It encourages societies to accept technological systems as natural developments rather than as institutions shaped by deliberate choices.

8

The historical sequence reveals a recurring pattern. Each stage of modern growth identifies conditions of life that institutions can reorganize into economic resources. Land, labor, energy, information, and knowledge have entered this sequence in successive eras.

These resources originate within the shared environment of human society and the natural world. Communities cultivate land; workers apply skill and effort; generations contribute knowledge and expression. Economic institutions establish mechanisms that reorganize these shared conditions into systems of ownership. Property law assigns control over land; industrial infrastructure organizes labor and energy; digital platforms collect behavioral information; computational systems assemble human knowledge into proprietary models.

The tension within this process becomes visible when the resource cannot plausibly be described as private in origin. Water offers the clearest example. No individual produces it, and every society depends on it. Yet financial and legal systems increasingly treat access to water as an asset that can be owned, traded, or controlled through investment structures. When institutions transform a resource so obviously common into a vehicle of ownership, the separation between origin and control becomes unmistakable.

Economic institutions do not operate apart from political authority. States establish the legal frameworks that transform common resources into systems of ownership and production. Through those frameworks, governments grant access to land, energy, information, and technological infrastructure. These arrangements generate wealth for firms and investors who operate within them; they also strengthen the strategic position of the States that oversee those systems.

Political communities therefore confront a difficult responsibility. They must decide whether the resources that sustain collective life remain subject to public authority or become instruments of concentrated ownership.

Governments often treat common resources not only as foundations of economic activity but also as instruments of geopolitical advantage. Rival States compete to secure control over these resources and the industries that depend on them. Ideological disputes accompany this competition; yet the underlying structure remains similar across competing systems. Prosperity and influence arise from institutions that convert common resources into concentrated forms of wealth and authority.

Modern societies continue to pursue innovation and expansion; the history of their development shows that growth has repeatedly depended on this conversion. Progress expands production and knowledge; yet it often detaches ownership from the common resources that made that expansion possible. The enduring question is whether societies can pursue advancement while maintaining alignment between the resources that belong to all and the systems that govern their use.

*